Making a Web Service using Claude Code - a Better Way

In my previous post, I described a naive attempt to make a web service for a fictional bicycle store using Claude Code. While it fulfilled the basic requirements, it wasn’t all that close to being something that could be published.

If that’s the wrong way to go about AI-agent-assisted development, what’s the right way? I tried the same project with a more active approach, incorporating some of the best practices described in the Best Practices for Claude documentation.

Planning the project: a conversation with Claude

In its recommendation, Explore first, then plan, then code, Anthropic suggests starting with an explore step, allowing Claude to read files in the project and ask questions. As this is a greenfield project with no existing files to read, I moved on to the next step, plan.

Before writing any code, I put Claude Code in Plan Mode and asked it to plan the work I needed it to do.

Plan a web service for a Bicycle Shop to track its customers and orders. Create a new database with example data for us to use in development that matches the schema we will use for production data. The code needs to be production ready. This project will have the application code but will not implement the deployment details. As part of this project, generate a typescript client SDK for the web service, stored in a folder on this project for now but capable of being deployed separately. Use test-driven development. Remember to include validation, logging, and authentication.

Part of Claude Code Best Practices is to Course Correct Early and Often. While planning this greenfield project, that meant identifying possible problems and solving them through productive conversations with Claude.

First, Claude suggested using a Fastify web service with an OpenAPI spec. In previous sessions I had been working with Claude to write a Hono web service, so I asked it why it suggested Fastify this time.

Is there a reason that you have suggested Fastify over Hono?

Claude considered the trade-offs and suggested that using Hono RPC to generate a client made Hono an appealing option.

Good question. Fastify was chosen for:

Maturity — battle-tested since 2016, large ecosystem

Built-in Pino — structured logging with zero config

Plugin encapsulation — clean separation of concerns

@fastify/swagger — well-established OpenAPI generation

Hono is a legitimate alternative, and arguably better for this project in one key way: Hono's RPC mode (hono/client) can auto-generate a fully type-safe client directly from the server route definitions. That would significantly simplify (or eliminate the need to hand-build) the TypeScript SDK you requested — the types flow from server to client automatically.

Other Hono strengths relevant here:

@hono/zod-openapi integrates Zod + route schemas + OpenAPI in one step (cleaner than the Fastify + zod-to-json-schema combination)

More modern, ergonomic TypeScript DX

Multi-runtime by default (Node 24 now, edge/Bun later if needed)

Trade-offs vs Fastify:

Younger ecosystem, fewer production case studies

Pino logging requires manual wiring (no built-in equivalent)

Plugin system less mature

My recommendation: If the auto-generated SDK via Hono RPC appeals to you, switching to Hono is worth it; it would make the SDK more robust and eliminate type drift between server and client. If you prefer a more established framework with less "new territory," Fastify is the safer choice.

However, I don’t think RPC is a good fit with the OpenAPI specification, and we can generate a client from the OpenAPI spec anyway. So I made that point to Claude.

If we're using OpenAPI specification, should we be using Hono RPC or should we be generating a client from the OpenAPI spec?

Claude suggested using the OpenAPI specification over the RPC functionality.

These two approaches are mutually exclusive in practice — you should pick one, and given your requirements, generating from the OpenAPI spec is the better fit.

Why not both / why not Hono RPC:

Hono RPC works by importing the Hono app's type definition directly into the client. That means the SDK has a compile-time dependency on the server source code. That's fine in a monorepo where server and SDK are always versioned together, but it contradicts your goal of an SDK that can be deployed separately — a consumer of the published @bikestoreapi/sdk package would need to pull in Hono as a peer dependency and share the server's type exports.

OpenAPI generation is the right approach here because:

The spec becomes a stable, versioned contract between server and SDK

The SDK is a true standalone artifact with no server code dependency

Consumers in other languages (Python, C#, etc.) can also generate clients from the same spec

@hono/zod-openapi generates the spec automatically from your Zod schemas, so there's no manual spec maintenance

Recommended toolchain:

| Role | Tool |
| --- | --- |
| Server framework | Hono with @hono/zod-openapi |
| Spec generation | Auto from @hono/zod-openapi at GET /api/v1/openapi.json |
| SDK generation | @hey-api/openapi-ts (generates a typed client from the spec) |
| SDK HTTP layer | @hey-api/client-fetch (pairs with the above) |

The flow becomes: Zod schemas → OpenAPI spec → generated SDK — one source of truth, no drift.

Would you like me to update the plan to use Hono + @hono/zod-openapi with a generated SDK via @hey-api/openapi-ts?

However, should we still be using Hono if we are using OpenAPI instead of RPC? If we could use OpenAPI with Fastify, that would seem to eliminate the justification for using Hono.

If we are using the OpenAPI spec to generate the SDK, is Hono still a better choice than Fastify?

Claude admitted that Fastify would be the better choice, given this observation.

Honestly, no — without RPC, the case for Hono weakens considerably. Let me be direct:

The main remaining Hono advantage was @hono/zod-openapi — Zod schemas define routes and generate the spec in one step. That's genuinely cleaner than Fastify's story.

But Fastify has closed that gap with fastify-type-provider-zod, which lets Zod schemas work natively as Fastify's type provider. Combined with @fastify/swagger, the Zod → OpenAPI pipeline is now comparable.

What Fastify still wins on:

| Concern | Fastify |
| --- | --- |
| Logging | Pino built-in, zero config, request IDs, structured JSON out of the box |
| Maturity | 8+ years, battle-tested at scale, larger community |
| Auth | @fastify/jwt is well-established and deeply integrated |
| Plugin system | Encapsulation model is well-suited to this project's module structure |
| Ecosystem | More plugins, more Stack Overflow answers, more production examples |

Where Hono still leads (without RPC): multi-runtime support (edge/Bun/Deno) — relevant only if you anticipate deploying to those targets, which you haven't mentioned.

Recommendation: Stick with Fastify. The original reasoning holds once RPC is off the table. The SDK will be generated from the OpenAPI spec regardless of which framework produces it.

Should I update the plan to confirm Fastify + fastify-type-provider-zod + @fastify/swagger as the stack, with the SDK generated via @hey-api/openapi-ts?

That makes a lot more sense. However, I’m pretty sure that Scalar is preferable to Swagger for new projects.

Has Swagger been replaced by Scalar at this point?

Claude recognized the growing preference for Scalar over Swagger.

Scalar hasn't formally replaced Swagger UI — Swagger UI is still maintained and widely used — but Scalar has become the preferred choice for new projects as of 2024-2025. It's significantly more polished, with a much better UI and developer experience.

The important distinction is that these are just the documentation UI layer — the spec generation is separate:

| Concern | Package |
| --- | --- |
| OpenAPI spec generation | @fastify/swagger (unchanged, still the standard) |
| Documentation UI | @scalar/fastify-api-reference instead of @fastify/swagger-ui |

So the change is minor: drop @fastify/swagger-ui, add @scalar/fastify-api-reference. The spec is still generated the same way and served at /api/v1/openapi.json — Scalar just consumes it.

Scalar also has a nice side effect for your SDK: its UI has a built-in API client (like Postman in the browser) which makes manual testing during development easier.

Should I update the plan to use Scalar instead of Swagger UI?

With that change, the bones of the project were in place.

Make it better with idempotency protection

I had a web service planned at this point, but a few changes could get it somewhere closer to production ready. What happens if the user accidentally submits the same order twice? I needed to add idempotency protection to the web service, so I asked Claude what calls should be idempotent.

I think we need to consider making the Create Order calls idempotent. Are there any other calls that should be idempotent? What's the best way to go about that?

Claude pointed out where idempotency protection was needed and began to implement it.

Great consideration. Beyond POST /orders, here's the full picture:

Endpoints that need idempotency protection:

POST /orders — most critical: duplicate = double stock decrement + double charge

POST /customers — partially protected by unique email constraint, but a network retry before the constraint error reaches the client can still create duplicates in some edge cases

POST /products — same reasoning; SKU constraint helps but idempotency is cleaner

Endpoints that are already naturally idempotent:

All GET requests — by definition

PUT /customers/:id, PUT /products/:id — replacing with the same data is safe

PATCH /orders/:id/status — the state machine handles this: sending PROCESSING when already PROCESSING returns a 422, which is correct behavior

DELETE /orders/:id (cancel) — cancelling an already-cancelled order can be made to return 200 with no side effects

The standard approach is Stripe-style idempotency keys: the client generates a UUID and sends Idempotency-Key: <uuid> with the request. The server stores the key + response; replayed requests return the cached response with an Idempotent-Replayed: true header. Keys expire after 24 hours.

It asked me whether to store the idempotency keys in the same database as the other data or in a separate Redis cache. I told it to use the same database, and it updated the plan.
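Before the updated plan, it may help to make the replay behavior concrete. The following is a minimal in-memory sketch of the key-plus-cached-response idea, not the plugin Claude went on to plan: `IdempotencyStore`, `handle`, and `CachedResponse` are hypothetical names, and a `Map` stands in for the database table.

```typescript
// Hypothetical shapes for illustration; the real project stores records in its database.
interface CachedResponse {
  status: number;
  body: unknown;
  headers: Record<string, string>;
}

interface StoredEntry {
  response: CachedResponse;
  storedAt: number; // epoch milliseconds
}

const TTL_MS = 24 * 60 * 60 * 1000; // keys expire after 24 hours

class IdempotencyStore {
  private readonly entries = new Map<string, StoredEntry>();
  private readonly now: () => number;

  // The clock is injectable so expiry can be exercised without waiting a day.
  constructor(now: () => number = () => Date.now()) {
    this.now = now;
  }

  async handle(
    key: string,
    run: () => Promise<CachedResponse>,
  ): Promise<CachedResponse> {
    const existing = this.entries.get(key);
    if (existing !== undefined && this.now() - existing.storedAt < TTL_MS) {
      // Replay: skip the handler and return the stored response, flagged as a replay.
      return {
        ...existing.response,
        headers: { ...existing.response.headers, "Idempotent-Replayed": "true" },
      };
    }
    // First request, or an expired key: run the handler and remember the result.
    const response = await run();
    this.entries.set(key, { response, storedAt: this.now() });
    return response;
  }
}
```

A caller would wrap the order-creating handler as `store.handle(keyFromHeader, () => createOrder(payload))`, where `createOrder` is likewise a placeholder. The sketch deliberately ignores two things a real implementation has to handle: concurrent requests that arrive with the same key before the first one finishes, and scoping keys so that one user cannot replay another user's response.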

IdempotencyRecord table added to the Prisma schema — stores (key, userId, requestPath) as a unique index so keys are scoped per user and per endpoint, preventing cross-user replay attacks.

idempotency.plugin.ts added to the plugins folder — a Fastify plugin that:

On POST /orders: enforces the Idempotency-Key header (400 if missing)

On POST /customers and POST /products: accepts the header if present

On first request: processes normally, stores response in IdempotencyRecord

On replay: short-circuits immediately, returns cached response + Idempotent-Replayed: true header

Keys expire after 24 hours; an expired key is treated as a new request

Naturally idempotent endpoints (PATCH /orders/:id/status, all GETs, all PUTs) get no changes — they're already safe.

Idempotency tests added to the test scenarios: replay produces no side effects, stock not decremented twice, missing key on orders returns 400, expired keys work correctly, cross-user key isolation confirmed.

Make it even better with retry functionality in the SDK

When a web service returns HTTP status 408, 429, 500, 502, 503, or 504, the client can usually retry the request safely, on the chance that it hit a transient error or a throttling issue. Of course, there’s a chance that the error is persistent, or that repeated retries will worsen throttling, so the client also has to limit the number of retries. Exponential backoff lessens the risk of triggering further throttling errors by waiting progressively longer between retries, while jitter adds some randomness to the retry timing so that many clients do not retry at the same moment. I asked Claude whether it had applied retry logic to the SDK.

Do we have any retry functionality built into the SDK yet?

It explained that the logic did not exist yet, and how it would implement it.

Not yet — the plan only covers the generated client from @hey-api/openapi-ts, which provides no retry logic out of the box. This is an important gap, especially since the idempotency keys we just designed are only useful if the SDK actually retries with the same key on failure.

Here's what needs to be added:

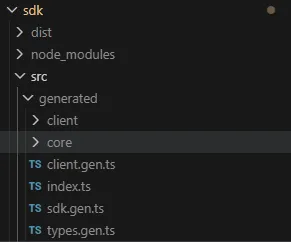

sdk/src/retry.ts — a custom fetch wrapper with exponential backoff + jitter, passed into @hey-api/client-fetch's fetch config option

Retry only on safe conditions: network errors, 408, 429 (with Retry-After header respect), 500, 502, 503, 504

Never retry on 400–409 (except 408) or 410+ — those are deterministic failures

Critical: on retry, the original Idempotency-Key header must be preserved, not replaced — otherwise idempotency is useless

sdk/src/index.ts wires the retry fetch into the generated client at initialization

Default config: 3 attempts, exponential backoff starting at 500ms, max 10s delay, jitter to prevent thundering herd.

Adding retry meant hand-writing (Claude considers itself to have hands?) a client wrapper to include the logic, since it wasn’t automatically included in the SDK.

Plan updated. The SDK now has explicit retry support via a hand-written sdk/src/retry.ts module — the only hand-written code in the SDK since everything else is generated. The key design point: because retry.ts wraps the fetch function rather than the generated service methods, it works automatically for all generated calls without any modification to the generated output.

The idempotency + retry pairing now works correctly end-to-end: the caller generates one UUID, passes it as Idempotency-Key, and if the network drops mid-request, the SDK retries with the same key; the server replays the cached response rather than creating a duplicate order.

At this point, the plan was complete, so I approved it and Claude built the web service. Claude ran for about 25 minutes, including some time spent condensing the context when it ran over the context limit, and finished with the complete source code.
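The retry behavior in the plan (three attempts, exponential backoff from 500ms capped at 10s, jitter, Retry-After on 429, and the same headers on every attempt) can be sketched roughly as follows. This is not the retry.ts that Claude generated. `createRetryFetch`, `RetryOptions`, and `backoffDelay` are hypothetical names, and the "full jitter" formula is one common choice that I am assuming here.

```typescript
type FetchInput = Parameters<typeof fetch>[0];
type FetchInit = Parameters<typeof fetch>[1];
type FetchFn = (input: FetchInput, init?: FetchInit) => Promise<Response>;

interface RetryOptions {
  maxAttempts: number; // total tries, including the first
  baseDelayMs: number;
  maxDelayMs: number;
  sleep: (ms: number) => Promise<void>; // injectable for tests
  random: () => number; // injectable for tests
}

const RETRYABLE_STATUS = new Set([408, 429, 500, 502, 503, 504]);

const defaults: RetryOptions = {
  maxAttempts: 3,
  baseDelayMs: 500,
  maxDelayMs: 10_000,
  sleep: (ms) => new Promise<void>((resolve) => setTimeout(resolve, ms)),
  random: Math.random,
};

// Full jitter: a random delay in [0, min(maxDelayMs, baseDelayMs * 2^retry)).
function backoffDelay(retry: number, opts: RetryOptions): number {
  const ceiling = Math.min(opts.maxDelayMs, opts.baseDelayMs * 2 ** retry);
  return Math.floor(opts.random() * ceiling);
}

// Only the delta-seconds form of Retry-After is handled; HTTP dates are ignored.
function retryAfterMs(response: Response): number | undefined {
  const header = response.headers.get("Retry-After");
  if (header === null || header.trim() === "") return undefined;
  const seconds = Number(header);
  return Number.isFinite(seconds) && seconds >= 0 ? seconds * 1000 : undefined;
}

function createRetryFetch(
  baseFetch: FetchFn,
  overrides: Partial<RetryOptions> = {},
): FetchFn {
  const opts: RetryOptions = { ...defaults, ...overrides };
  return async (input, init) => {
    let lastError: unknown;
    for (let attempt = 0; attempt < opts.maxAttempts; attempt++) {
      const isLast = attempt === opts.maxAttempts - 1;
      let delay = backoffDelay(attempt, opts);
      try {
        // A Request body can be read only once, so each attempt sends a clone.
        // The same input and init are reused, so headers such as
        // Idempotency-Key are identical on every attempt.
        const attemptInput = input instanceof Request ? input.clone() : input;
        const response = await baseFetch(attemptInput, init);
        if (!RETRYABLE_STATUS.has(response.status) || isLast) return response;
        if (response.status === 429) {
          delay = Math.min(opts.maxDelayMs, retryAfterMs(response) ?? delay);
        }
        await response.body?.cancel(); // discard the failed response before retrying
      } catch (error) {
        // Any thrown error is treated as a network failure in this sketch.
        lastError = error;
        if (isLast) throw error;
      }
      await opts.sleep(delay);
    }
    throw lastError;
  };
}
```

Because the wrapper has the same shape as `fetch`, it can be handed to any client that accepts a custom fetch function, which is the property the plan relies on. A production version would probably also want to stop retrying when the caller aborts the request.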

Create the documentation

To reduce the amount of context that needs to be provided at the beginning of a session, Claude Code can use a CLAUDE.md file. This file acts similarly to how a README.md file should work for a developer new to a project, giving the context necessary to get up to speed on useful commands in the repository and a high-level view of the architecture. Claude Code provides an init command that will auto-generate this file:

/init

Claude generated its CLAUDE.md file based on the code that it had previously created.

CLAUDE.md has been created. It covers:

Commands: dev server, build, lint, test (unit/integration/coverage/single file), all database operations, OpenAPI export, and SDK generation/build

Architecture: request lifecycle, plugin registration order and responsibilities, module structure pattern, error hierarchy, orders state machine and stock transaction pattern, auth token design, and SDK generation flow

Testing: how unit tests mock Prisma, the config mock pattern required for modules that import config, how integration tests work (globalSetup, table truncation, auth token shortcuts), and SDK test patterns

With documentation now available for robots, I needed to add documentation for humans. Additionally, the instructions for running the application assumed the existence of a local PostgreSQL instance, while I wanted to run the local instance in a Docker container, so I asked for those instructions to be included in a README.md:

generate a README with instructions for how to generate a local database in a docker image

Claude generated the human documentation with those instructions:

README.md is created. Key decisions:

Uses postgres:16 with a named container so it's easy to stop/start without losing data

Creates both bikestore and bikestore_test in the same container via docker exec — no docker-compose needed

Default credentials in the Docker command match .env.example exactly, so no edits required to get running

Includes the JWT secret generation command inline so it's self-contained

Covers the full flow: container → env files → migrate → seed → run

The first draft

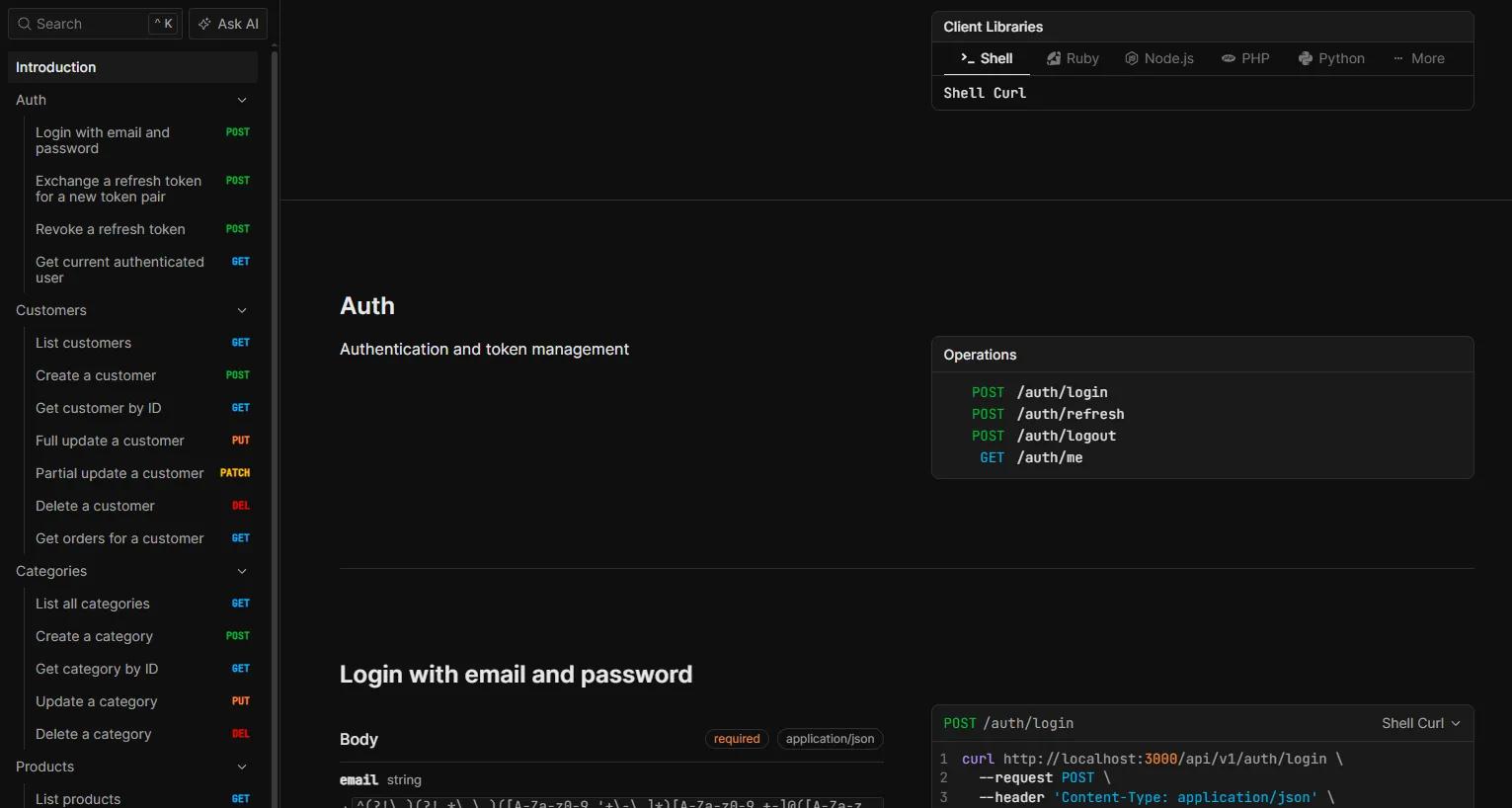

While the implementation in my previous blog post had no testing front end, by including Scalar in this version I now have a UI for testing, rather than just curl calls.

I also had a separate folder that could generate an SDK client from a series of npm commands:

# at the project root, to generate the openapi.json

npm run export-openapi

# go to the sdk folder

cd sdk

# in the sdk folder, to generate the client types using openapi-ts

npm run generate

This generates the client in a separate SDK folder.

You can view the current state of this web service on my GitHub account: Bike Store API Repository

Further refinements

There were still things to work through, however. The dependencies were not all up to date, and I had some failing integration tests caused by configuration issues. I had Claude identify the out-of-date dependencies and update them one by one. For the failing integration tests, I gave Claude explicit instructions to run the tests and explain how to fix them, as I have often seen Claude try to explain errors by reading the code rather than running it. Anthropic calls this practice Give Claude a way to verify its work.

run the integration tests and look into why they are not working. Explain the problem and the steps to solve it

At this point, I was satisfied with the code. However, Claude still did not think it was ready for production.

Is this web service production ready?

Its response:

Here's an honest production readiness assessment:

What's solid

All modules fully implemented — auth, customers, categories, products, orders have routes + service + schema + tests

All plugins implemented — prisma, jwt, idempotency, error-handler, openapi

TypeScript compiles clean — tsc --noEmit passes with zero errors

35/35 unit tests pass

0 npm audit vulnerabilities

No TODOs or FIXMEs in source code

Core security implemented — JWT + refresh token rotation, bcrypt passwords, role-based access, idempotency

Money handled correctly — Decimal @db.Decimal(10,2) throughout, no floats

Order transactions correct — stock decrement with updateMany guard, cancellation restores stock

Notable gaps before production

Missing HTTP hardening (no packages installed):

No rate limiting (@fastify/rate-limit) — auth endpoints especially

No CORS (@fastify/cors) — needed if any browser client will consume the API

No security headers (@fastify/helmet) — X-Frame-Options, CSP, etc.

No request timeout configuration

Missing operational concerns:

No graceful shutdown (no SIGTERM/SIGINT handler in server.ts)

openapi.json not yet generated — SDK has no generated files yet (scripts exist but haven't been run)

Coverage thresholds fail at 17%/20% vs 80% configured — because unit tests can't cover routes/plugins (those are covered by integration tests which need PostgreSQL). The vitest.config.ts thresholds are misconfigured for a unit-only run.

Infrastructure (out of scope for code, but worth noting):

No Dockerfile / docker-compose

No CI/CD pipeline config

No deployment configuration

Summary

The business logic is production-quality — the core API is complete, secure by design, and well-structured. What's missing is the HTTP infrastructure layer (rate limiting, CORS, security headers, graceful shutdown) that sits around the application logic. Those are roughly 1–2 days of work.

The quickest wins to close the gap: @fastify/rate-limit on auth routes, @fastify/cors, @fastify/helmet, and a SIGTERM handler in server.ts.

It’s still not quite production ready, but it’s a great deal closer than in the previous blog post. The web service has auth, testing, and validation, and the client has a retry strategy.
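Of the gaps Claude listed, the missing SIGTERM/SIGINT handler is the easiest to picture. Below is a minimal sketch of what such a handler might look like, assuming the server object exposes a promise-returning `close()`. `installGracefulShutdown`, `Closable`, and `SignalSource` are hypothetical names, and this is not code from the repository.

```typescript
// Minimal structural types so the sketch does not depend on Fastify or Node typings.
interface Closable {
  close(): Promise<unknown>;
}

interface SignalSource {
  once(signal: string, handler: () => void): unknown;
}

function installGracefulShutdown(
  app: Closable,
  signals: SignalSource,
  exit: (code: number) => void,
  timeoutMs = 10_000, // assumed grace period before forcing an exit
): void {
  let shuttingDown = false;

  const shutdown = (): void => {
    if (shuttingDown) return; // ignore repeated signals
    shuttingDown = true;

    // Force an exit if open connections keep close() from settling in time.
    const timer = setTimeout(() => exit(1), timeoutMs);

    app.close().then(
      () => {
        clearTimeout(timer);
        exit(0);
      },
      () => {
        clearTimeout(timer);
        exit(1);
      },
    );
  };

  signals.once("SIGTERM", shutdown);
  signals.once("SIGINT", shutdown);
}

// In server.ts this might be wired up as (assumption, not taken from the project):
//   installGracefulShutdown(app, process, (code) => process.exit(code));
```

Passing the signal source and the exit function in as parameters keeps the handler testable without sending real signals to the process.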

In a coming blog post, I will work through the final necessary changes and deploy the service on my local Kubernetes cluster.

What I learned

Through using Claude Code Best Practices and staying engaged in the creation process, I was able to make a much better web service than when I gave Claude simple instructions in my previous post.

- Explore first, then plan, then code
  - Claude made a plan for what it was going to do before it started writing
- Course Correct Early and Often
  - When I saw Claude going off course, I redirected it to keep it on track
- Give Claude a way to verify its work
  - I told Claude to run the integration tests in order to fix them